Welcome to EasifyMe!

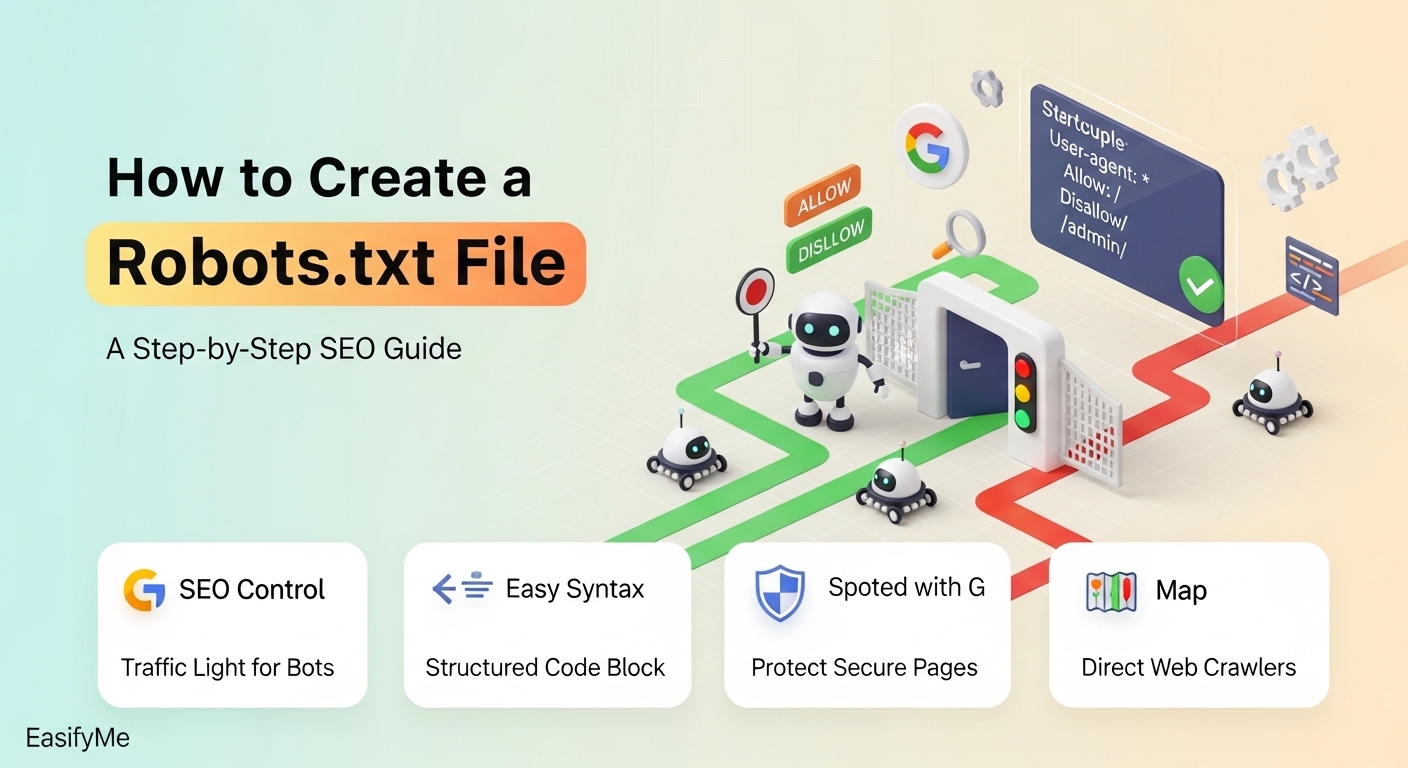

If you want to control how search engines crawl your website and keep bots out of areas that aren't meant for search, this guide will show you exactly how to do it in minutes without spending a dime. Learning how to create a robots.txt file is one of the simplest moves you can make to improve your site's technical health and search visibility.

A robots.txt file is a simple text file that lives on your server and acts as a set of "house rules" for search engine bots like Googlebot. With the right rules in place, Google can focus its energy on your high-quality content instead of wasting time on admin folders or duplicate pages. Our robots.txt generator tool makes this technical task effortless for everyone.

Quick Overview: Robots.txt Rules at a Glance

| Directive | What It Does | Best Used For |
|---|---|---|
| User-agent | Targets specific bots (e.g., Googlebot) | Specific bot instructions |
| Disallow | Tells bots NOT to crawl a folder/page | Admin panels, private files |
| Allow | Tells bots they can crawl a specific file | Sub-folders within blocked areas |
| Sitemap | Provides the location of your sitemap | Speeding up discovery |
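To see the four directives working together, here is a minimal sketch that parses a small robots.txt with Python's standard-library `urllib.robotparser`. The domain `example.com` and the paths are placeholders, not taken from a real site. Python's parser applies the first rule that matches, so the `Allow` line is listed before the broader `Disallow`; Google instead picks the most specific matching rule, so for Googlebot the order would not matter.

```python
from urllib.robotparser import RobotFileParser

# Illustrative file; example.com and the paths are placeholders.
ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# A normal page is not covered by any rule, so it may be crawled.
blog_ok = parser.can_fetch("Googlebot", "https://example.com/blog/hello")
# Anything under /wp-admin/ is blocked by the Disallow rule...
admin_ok = parser.can_fetch("Googlebot", "https://example.com/wp-admin/options.php")
# ...except the one file that the Allow rule opens up.
ajax_ok = parser.can_fetch("Googlebot", "https://example.com/wp-admin/admin-ajax.php")
# Sitemap lines are collected separately (requires Python 3.8+).
sitemaps = parser.site_maps()

print(blog_ok, admin_ok, ajax_ok, sitemaps)
```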

Why You Need a Robots.txt File Right Now

Every website has a "Crawl Budget." This is the limited amount of time and resources Google allocates to explore your site. If your website is cluttered with internal search results, temporary folders, or backend scripts, bots might leave before they ever find your latest blog post.

In 2026, search engines are selective about what they crawl. Using robots.txt for SEO allows you to point the bots in the right direction. It's not just about blocking; it's about efficiency. If you don't tell the bots where not to go, they might spend much of their budget on "junk" pages, leaving your important pages crawled late or not at all.

Furthermore, privacy is a major concern. You don't want bots crawling your login pages or internal PDF documents. A well-structured robots.txt keeps well-behaved search bots away from areas that aren't meant for the public. Keep in mind that the file itself is publicly readable and only stops crawling, so anything truly sensitive also needs a password or a noindex rule.

Step-by-Step: How to Create a Robots.txt File

You don't need to be a programmer to handle this. We've designed our tools at EasifyMe to take the complexity out of the equation. Follow these simple steps to get your file live today.

Step 1 – Identify What to Block

Before using a robots.txt generator tool, look at your site structure. Identify folders like /wp-admin/, /temp/, or /cgi-bin/. These are classic examples of what most webmasters choose to block with robots.txt.

Step 2 – Use the EasifyMe Robots Generator

Navigate to our Free SEO Utilities and select the Robots.txt Generator. Our tool makes this step effortless. Simply toggle the options for the bots you want to allow or disallow. It will generate the Allow and Disallow syntax for your robots.txt automatically, in real time.

Step 3 – Add Your Sitemap Link

One of the best XML sitemap optimization tips is to include your sitemap URL in your robots.txt, usually at the very bottom. Our generator has a dedicated field for this. It tells Google: "While you're checking my rules, here is the map to my entire site."

Step 4 – Save and Upload

Download the generated text as a robots.txt file. Using an FTP client or your hosting file manager, upload it to the root directory of your website (usually the public_html or www folder). You can verify it by visiting yourdomain.com/robots.txt.
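If you'd like to see what the four steps produce, the sketch below assembles the same kind of file by hand. `build_robots_txt` is a hypothetical helper written for this guide, not the code behind the EasifyMe generator, and `yourdomain.com` is a placeholder.

```python
def build_robots_txt(disallow_paths, sitemap_url=None, user_agent="*"):
    """Return robots.txt text with one rule group and an optional Sitemap line."""
    lines = [f"User-agent: {user_agent}"]
    for path in disallow_paths:
        lines.append(f"Disallow: {path}")
    if not disallow_paths:
        # An empty Disallow value means nothing is blocked.
        lines.append("Disallow:")
    if sitemap_url:
        # A blank line ends the group; the Sitemap line stands on its own.
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


# Step 1 folders plus the Step 3 sitemap link (placeholder domain).
robots_txt = build_robots_txt(
    ["/wp-admin/", "/temp/", "/cgi-bin/"],
    sitemap_url="https://yourdomain.com/sitemap.xml",
)
print(robots_txt)
```

Saving the printed text as robots.txt and uploading it to your root directory completes Step 4.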

Robots.txt for Different Platforms

| Platform | Common Robots.txt Need | Ease of Setup |
|---|---|---|
| WordPress | Block /wp-admin/ and /trackback/ | Very Easy |
| Shopify | Automatically managed (mostly) | Limited Control |
| Custom HTML | Requires manual file upload | Full Control |
| Wix/Squarespace | Built-in system settings | Easy but Restricted |

Expert Insights: High-EEAT Robots.txt Management

Experience tells us that a small mistake in your robots.txt can knock your entire site out of search. Any technical SEO robots.txt guide must emphasize the "no-nos." For instance, never use Disallow: / on a live site unless you want to disappear from Google entirely!

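Expert tip: Use the "User-agent: *" rule to apply general rules to all bots, then provide specific instructions for "User-agent: Googlebot" if you want Google to treat your site differently than Bing or DuckDuckGo. Keep in mind that a bot follows only the most specific group that matches it, so Googlebot will ignore the "User-agent: *" rules once it has its own group. Repeat any general rules you still want Googlebot to obey. This level of control is what separates beginners from pros.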
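Here is a small sketch of how separate groups behave, again using Python's standard-library parser as a stand-in for a real crawler. The `/drafts/` and `/beta/` folders are made-up examples. Notice that Googlebot, having its own group, is no longer bound by the general `/drafts/` rule.

```python
from urllib.robotparser import RobotFileParser

# Two groups: one for every bot, one just for Googlebot (paths are illustrative).
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/

User-agent: Googlebot
Disallow: /beta/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Bingbot has no group of its own, so the general group applies to it.
bing_drafts = parser.can_fetch("Bingbot", "/drafts/post")
bing_beta = parser.can_fetch("Bingbot", "/beta/page")

# Googlebot matches its own group and only that group.
google_drafts = parser.can_fetch("Googlebot", "/drafts/post")
google_beta = parser.can_fetch("Googlebot", "/beta/page")

print(bing_drafts, bing_beta, google_drafts, google_beta)
```

If Googlebot should stay out of `/drafts/` as well, that line has to be repeated inside the Googlebot group.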

Common Pitfalls to Avoid

- Blocking CSS/JS: In 2026, Google needs to "see" your site like a human. If you block your styling and script files, Google can't render your pages properly, which can hurt how they are evaluated and ranked.

- Case Sensitivity: Remember that /Admin/ and /admin/ are different. Always double-check your folder names.

- Using it for Security: Robots.txt is a "handshake" agreement. Malicious bots will ignore it. Use our Developer Tools to learn about real security headers.
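The case-sensitivity pitfall is easy to demonstrate. In this minimal sketch, a rule written for `/admin/` does nothing for `/Admin/`; the folder names are illustrative, and Python's standard-library parser is used here as a rough stand-in for a search engine bot.

```python
from urllib.robotparser import RobotFileParser

parser = RobotFileParser()
# A single rule, written in lowercase.
parser.parse(["User-agent: *", "Disallow: /admin/"])

# Paths are compared character by character, so case matters.
lower_blocked = not parser.can_fetch("*", "/admin/login")
upper_blocked = not parser.can_fetch("*", "/Admin/login")

print(lower_blocked, upper_blocked)
```

If your server really uses both spellings, each one needs its own Disallow line.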

Tailored Robots.txt Solutions for Every User

For Developers 💻

When working on a staging or "dev" site, use Disallow: / to keep search engines from crawling your unfinished work. Once you go live, make sure you swap it for an optimized version, because leaving the blanket block in place will hide the whole site. Check our EasifyMe Developer Tools for more server-side utilities.
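A simple pre-launch check can catch a staging file that was left in place. The sketch below is one possible approach, not an EasifyMe tool: `blocks_everything` is a hypothetical helper, and the two file contents are illustrative.

```python
from urllib.robotparser import RobotFileParser

# Illustrative file contents for the two environments.
STAGING = "User-agent: *\nDisallow: /\n"
PRODUCTION = "User-agent: *\nDisallow: /wp-admin/\n"


def blocks_everything(robots_txt, agent="Googlebot"):
    """Return True if the given bot may not even fetch the homepage."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(agent, "/")


staging_blocked = blocks_everything(STAGING)
production_blocked = blocks_everything(PRODUCTION)
print(staging_blocked, production_blocked)
```

Running a check like this against the file you are about to deploy gives a quick warning before the blanket block reaches your live site.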

For Content Creators ✍️

Ensure the robots.txt for your WordPress website isn't blocking your "Uploads" folder. If you block /wp-content/uploads/, your images can drop out of Google Image Search, killing a huge traffic source. Use our EasifyMe Image Editor to compress those images so they rank even better.

For Small Businesses 📈

Keep it simple. A standard robots.txt that allows all bots but keeps them out of your backend is usually enough. Focus your energy on your sitemap link within the file to help customers find your service pages faster.

Frequently Asked Questions (FAQs)

What does a basic robots.txt file look like?

A standard website robots.txt example usually starts with User-agent: * followed by Disallow: /wp-admin/. This tells all bots they are welcome, except in the administrative backend. It is one of the most common setups for small WordPress websites.

How do I test if my robots.txt is working?

The best way is to use the robots.txt report in Google Search Console, which replaced the older Robots.txt Tester. It shows which robots.txt files Google found for your site and flags any errors or warnings. You can also manually check the file by typing your URL followed by /robots.txt in your browser.
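You can also run a rough local check before uploading. The sketch below compares what you expect against what the rules actually do; `check_rules` is a hypothetical helper, and the paths are examples. Treat the result as a first pass only: Python's standard-library parser does not support `*` and `$` wildcards and applies the first matching rule rather than the most specific one, so Google may read complex files differently.

```python
from urllib.robotparser import RobotFileParser


def check_rules(robots_txt, expectations, agent="Googlebot"):
    """Return the paths whose crawlability differs from what was expected."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [
        path
        for path, expected in expectations.items()
        if parser.can_fetch(agent, path) != expected
    ]


# Illustrative file that blocks more than the author intended.
ROBOTS_TXT = "User-agent: *\nDisallow: /wp-admin/\nDisallow: /wp-content/\n"

# True means "should be crawlable", False means "should be blocked".
mismatches = check_rules(
    ROBOTS_TXT,
    {
        "/": True,
        "/wp-admin/": False,
        "/wp-content/uploads/logo.png": True,
    },
)
print(mismatches)
```

Here the check reports the image path, because the broad `/wp-content/` rule also covers the uploads folder.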

Can robots.txt remove a page from Google?

Not exactly. Robots.txt prevents crawling, but if other sites link to that page, Google might still index it. To fully remove a page, you need a "noindex" meta tag, and the page must not be blocked in robots.txt, or Google will never crawl it and see the tag. Use our SEO Tools to learn about meta robots tags.

Where do I put the robots.txt file?

It must always go in the root directory of your domain. For example: https://easifyme.com/robots.txt. If you place it in a sub-folder like /blog/robots.txt, search engine bots will completely ignore it.
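To make the location rule concrete, this short sketch works out where bots will look for the file, starting from any page URL. `robots_url` is a hypothetical helper name, and the example URLs are placeholders apart from the easifyme.com address mentioned above.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url):
    """Return the robots.txt address that governs the given page."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, replace the path, drop query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


main_site = robots_url("https://easifyme.com/blog/some-post?ref=home")
subdomain = robots_url("https://shop.example.com/products/item-1")
print(main_site)
print(subdomain)
```

Because the host is part of the address, a subdomain needs its own robots.txt; the file on the main domain does not cover it.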

How do I block a specific folder?

Use the Disallow rule. For example, to block a folder named "private," you would write: Disallow: /private/. This is the simplest way to use robots.txt to block pages that contain sensitive or irrelevant data.
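The trailing slash matters more than it looks, because a Disallow value is matched as a prefix of the URL path. The sketch below illustrates this with a hypothetical `blocked` helper and made-up paths, using Python's standard-library parser as an approximation of a crawler.

```python
from urllib.robotparser import RobotFileParser


def blocked(rule_path, url_path):
    """Return True if 'Disallow: rule_path' stops all bots from fetching url_path."""
    parser = RobotFileParser()
    parser.parse(["User-agent: *", f"Disallow: {rule_path}"])
    return not parser.can_fetch("*", url_path)


# With the trailing slash, only the folder's contents are covered.
in_folder = blocked("/private/", "/private/report.pdf")
similar_name = blocked("/private/", "/private-events/")

# Without the slash, every path starting with "/private" is covered.
similar_name_no_slash = blocked("/private", "/private-events/")

print(in_folder, similar_name, similar_name_no_slash)
```

Writing the rule as `/private/` keeps it from accidentally catching unrelated pages whose names merely start the same way.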

Does every website need a robots.txt?

Technically, no. If you don't have one, bots will crawl everything they find. However, for SEO it is highly recommended to have one to guide bots toward your most important content and manage your crawl budget effectively.

Easify Your Technical SEO Today

Managing your website shouldn't feel like a chore. By mastering how to use robots.txt file directives, you take the driver's seat of your SEO journey. It’s about being the boss of the bots and making sure they see your website's best side.

Ready to build yours? Use our Free Robots.txt Generator right now and get your site optimized in under a minute. While you're there, browse our EasifyMe SEO Tools to check your site's health and performance. We make tech easy!

Your site, your rules. Get started now!

Disclaimer: EasifyMe.com provides these tools and guides for informational purposes. Improper use of robots.txt can lead to site de-indexing. Always test your file before final implementation.